Look Mom, NoSQL! - 7. Map Reduce explained

In the fall of 2011 I started a series of blog posts on NoSQL databases. Since then I have developed a conference session on the topic which I presented at DevTeach 2012 in Vancouver in May. In developing the talk I spent a good deal of time trying to understand the concept of Map/Reduce, as it can be a challenging topic.

In the fall of 2011 I started a series of blog posts on NoSQL databases. Since then I have developed a conference session on the topic which I presented at DevTeach 2012 in Vancouver in May. In developing the talk I spent a good deal of time trying to understand the concept of Map/Reduce, as it can be a challenging topic.

As described earlier in this series, NoSQL databases can be partitioned across many systems (or servers). This gives rise to an interesting problem - how to query across these multiple servers. This problem was solved by the concept of Map/Reduce algorithms. Map/Reduce is a multi step querying process, which takes a big task and breaks it down into multiple smaller tasks, and as with many of the NoSQL innovations it was first developed at Google.

The first step is to produce a Map which is executed on each server - you can think of a Map as somewhat akin to an Index in a relational database. Subsequent Reduce steps are executed on each individual server and then on groups of servers using the results of the previous Reduce step to come up with the final answer.

Hopefully, an example will clarify the concept. The example is an expanded version of an example used in Ayende’s blog post on understanding Map/Reduce.

Example Domain

The example domain we are going to use is blog posts. Imagine a site (like Wordpress) that has multiple blogs, each with multiple posts and each post has multiple comments. As there is the potential for an unlimited number of authors the blog posts are distributed across multiple servers in a server farm. We are going to use RavenDB as the database for our example - an example RavenDB JSON document is shown in Listing 1

Listing 1: A typical Blog Post JSON document |

{ "type": "post", "name": "Map/Reduce explained", "blog_id": 3, "post_id": 6, "tags": ["nosql", "map reduce"], "post_content": "", "comments": [ { "source_ip": '127.0.0.1', "author": "john", "text": "Awesome blog post mate" }] } |

The blog_id identifies the blog author, the post_id identifies the post and the blog has an array of comments.

Our example query is to find the total number of comments for each author, summed across all the posts that the author has made.

Applying the Map

The first step is to apply the Map function to the data. Listing 2 shows the Map function we are going to apply.

Listing 2: The Map function to calculate the comment count |

from post in docs.posts select new { post.blog_id, comments_length = comments.length }; |

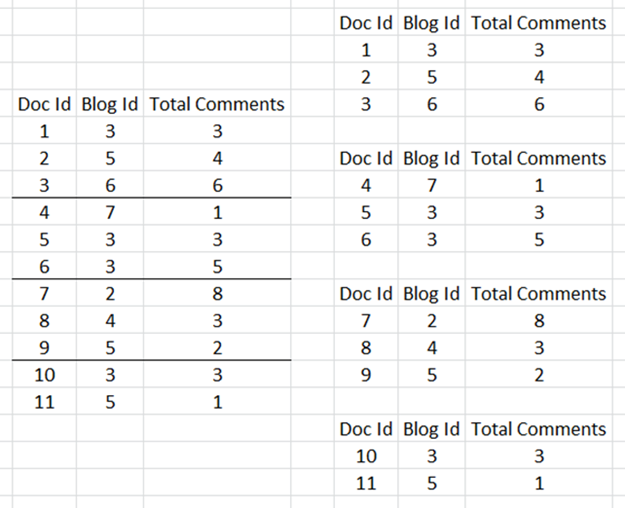

One of the cool things about RavenDB is that its Map and Reduce functions can be defined as LINQ expressions. Figure 1 shows the result of this function to a fictitious data set.

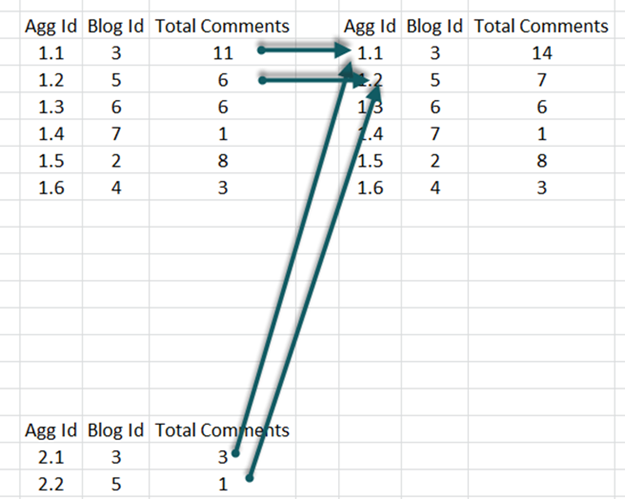

Figure 1: Applying the Map function |

|

In this dataset we have 11 blog posts from 6 authors spread across 4 nodes. The Map function creates a result set with 3 columns - a key (Doc Id), the Blog Id and the number of comments for that post.

Applying the Reduce Function

Now that we have mapped our data, we can start reducing it using a Reduce function to calculate the total number of comments for each of the 6 blog authors.

Listing 3: The Reduce function used to calculate the comment count. |

from agg in results group agg by agg.key into g select new { agg.blog_id, comments_length = g.Sum(x=>x.comments_length) }; |

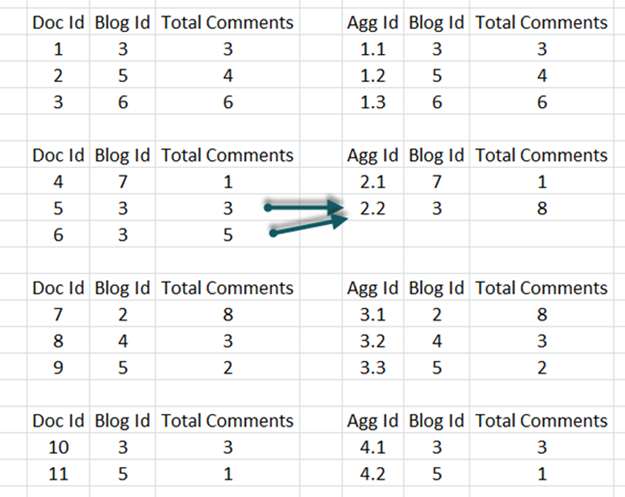

Again, the reduce function is a LINQ expression. It takes the results of the Map function and sums the comments by blog_id. The first step is to apply the reduce function separately to each of the 4 nodes - this is shown in Figure 2.

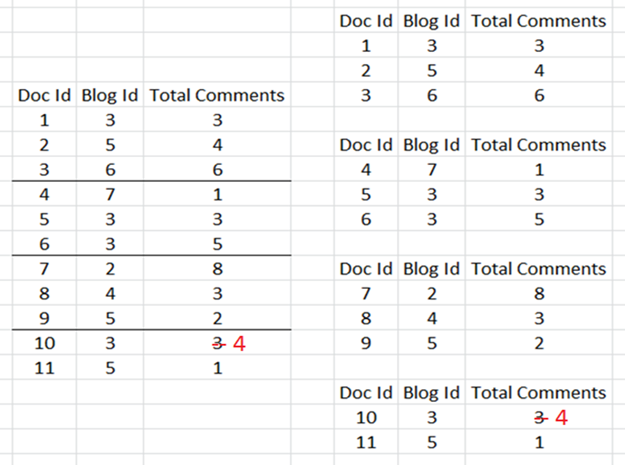

Figure 2: Applying the Reduce function to each node |

|

The application of this function is trivial for three of the nodes as all the blog posts are for different authors, but for the second node blog_id 3 has two posts so the reduce function sums the comments for this author.

One of the features of reduce functions that make them work is that the result set has the same “format” as the initial data, so we can apply the function repeatedly until we come up with the final answer.

To illustrate this, lets assume that the system has been set up to sum across the nodes in groups of three. This is arbitrary, we could get to the final answer in one step by just summing over all 4 nodes, but hopefully this will illustrate the point.

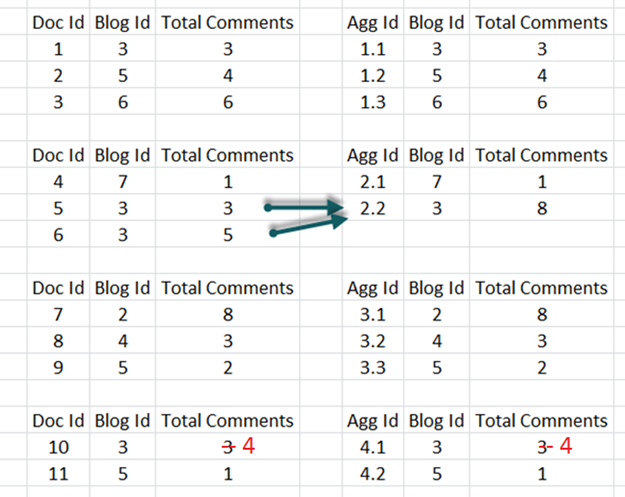

Figure 3: Applying the Reduce function to sum across 3 nodes |

|

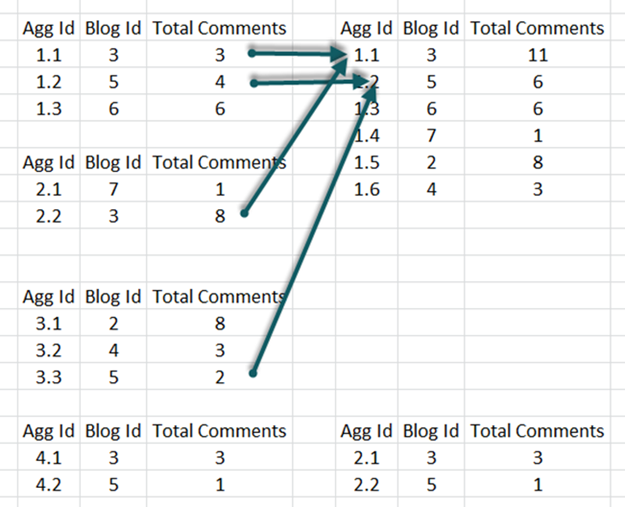

There are now two blog authors that have multiple posts. But we still have two result sets so finally we will apply the reduce function a third time to combine the results of these two sets - see Figure 4.

Figure 4: Applying the Reduce function to get the final answer |

|

We now have our final answer - the total number of comments for each of the 6 authors in our blog system.

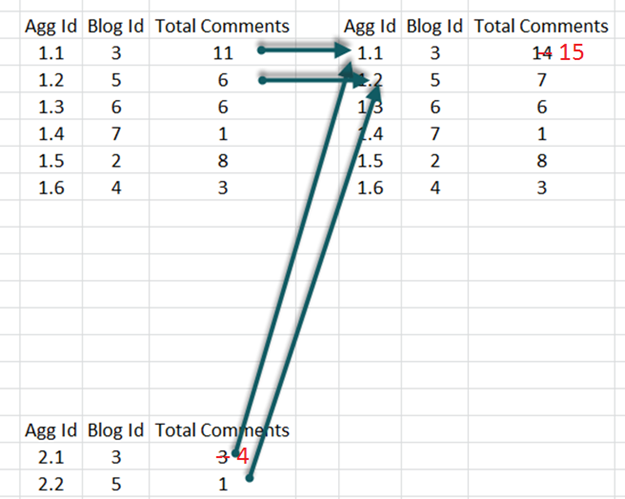

Updating the Results

The number of posts in the blogging system is not static, authors are always posting new blogs and visitors are always posting new comments, and this is where the power of the Map/Reduce functions come in, and hopefully I will show why it can be beneficial to sum over smaller batches of nodes rather than all of them.

In our example one of the blogs receives a new comment.

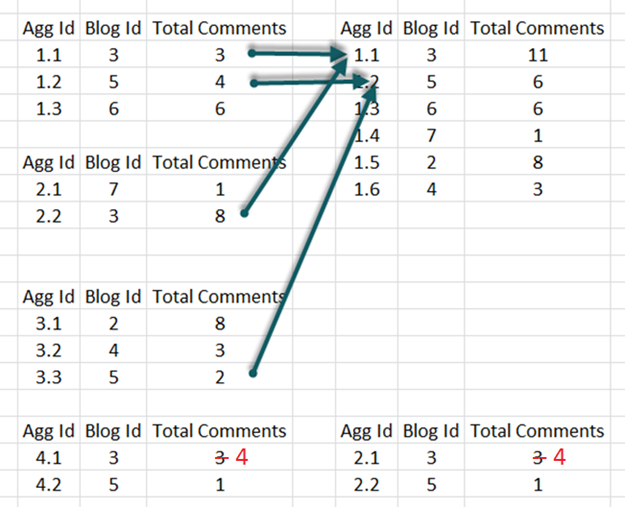

Figure 5: Updating the Results |

|

This just happens to happen on the blog on node 4. The Map function is therefore applied again - but only on the affected node.

Figure 6: Re-applying the first Reduce step |

|

The first Reduce function is also updated, but again only on node 4 (as the other 3 nodes are unchanged).

Figure 7: Re-applying the second Reduce step |

|

The second Reduce function is also updated, but only on group 2 as the first group in unchanged. Finally the final Reduce step is applied to get the updated results - Figure 8.

Figure 8: Re-applying the last Reduce step |

|

Yes, this example was contrived. But it illustrates the point that we don’t need to recalculate everything when one piece of data is updated or added.

Implementing Map/Reduce functions in a real NoSQL system is a little more complex than this trivial example, but hopefully this illustrates the concept.